Feds Get Involved Following Tesla Autopilot Death


A fatal crash involving a Tesla equipped with an autonomous-driving system has prompted not only a wrongful-death lawsuit by the family of the driver but also a federal probe into the vehicle maker’s much-scrutinized technology.

Tesla’s autopilot technology is a breakthrough in the use of artificial intelligence in cars and other vehicles, but is it ready for “prime time”? And how does the technology work?

Tesla’s autopilot feature relies on the following components and functions:

- A forward-facing radar

- Cameras that provide visibility up to 250 meters away

- A high-precision digitally-controlled electric assist braking system

- 12 long-range ultrasonic sensors positioned around the car’s perimeter, capable of sensing everything within 16 feet of the vehicle

- Cruise Control

- Auto-steering

What can affect the Tesla autopilot system:

- Sensors can be affected if there is debris covering them.

- Cameras can be affected if there is debris covering them.

- Autopilot does not work well when the road markings are not clear.

- Forward-facing radar is not able to detect objects approaching from the side.

- The driver still needs to pay attention and keep their hands on the wheel; if they do not, the car slows and stops.

So, the bottom line is that Tesla’s “autopilot” is just a set of driver-assist features; it does not come close to taking the driver out of the equation.

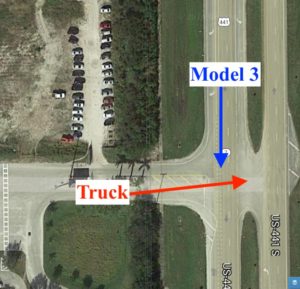

The fatal crash led to a lawsuit on behalf of Jeremy Banner, who died after colliding with a semitruck in Delray Beach. The suit claims that the Autopilot feature was defective. Banner reportedly switched it on 10 seconds before the crash, which occurred because the system failed to detect the semitruck and take the evasive action it was designed to take.

“The Palm Beach County Sheriff’s Office said the tractor trailer pulled into the path of the Tesla, and the Tesla’s roof was sheared off as it passed underneath the semi,” according to a story on WPTV-TV’s Web site titled “NTSB releases new report on deadly Tesla crash in Palm Beach County.” “Banner died at the scene.”

Safety groups have also called on the Federal Trade Commission (FTC) to investigate the company for violation of Section 5 of the FTC Act, which protects against “unfair or deceptive acts or practices in or affecting commerce.”

“An act or practice is unfair where it causes or is likely to cause substantial injury to consumers; cannot be reasonably avoided by consumers; and is not outweighed by countervailing benefits to consumers or to competition,” the legislation states. “Public policy, as established by statute, regulation, or judicial decisions may be considered with all other evidence in determining whether an act or practice is unfair. An act or practice is deceptive where a representation, omission, or practice misleads or is likely to mislead the consumer; a consumer’s interpretation of the representation, omission, or practice is considered reasonable under the circumstances; and the misleading representation, omission, or practice is material.”

One group, the Center for Auto Safety, headed by Jason Levine, believes Tesla is, at the very least, guilty of deceptive practices because the company asserts that its Model 3 sedan has the lowest probability of injury of any vehicle ever tested by NHTSA, a bold claim.

“We feel Tesla violates the laws on deceptive practices, both at the federal and state level,” Levine told CNBC in a story titled “Safety groups want FTC, state probes of Tesla’s Autopilot system – and its marketing efforts.” “They can say they’ve written language to cover their liabilities but their actions portray a desire to deceive consumers.”

Tesla CEO Elon Musk no doubt is motivated by the race to develop, advertise and sell the first so-called driverless vehicle in America. Experts predict 2020 is the finish line, and – among the multiple manufacturers in the running – whoever hits the road that year wins.

“First introduced in October 2015, Autopilot is what is known, in industry terms, as an Advanced Driver Assistance System, or ADAS,” the CNBC story states. “A number of other manufacturers have launched similar technologies, such as Cadillac’s Super Cruise and Audi’s Traffic Jam Pilot. Some systems can, under very limited circumstances, permit a driver to briefly take hands off the wheel. They all require the motorist to be ready to take immediate control in an emergency.”

The National Highway Traffic Safety Administration (NHTSA) objects to Tesla’s crash statistic and has pushed the company to stop characterizing its cars in a false light.

“NHTSA has established guidelines for how companies should talk about its crash tests,” Consumer Reports’ Jeff Plungis writes in a story titled “Feds Say Tesla Exaggerating Model 3 Crash-Test Results.” “The agency warns automakers about making statements about the comparative safety of different types of vehicles – especially those in different weight classes. Those types of statements can confuse consumers and undermine the crash-testing program, which aims to promote the sale of vehicles with high levels of safety, says David Friedman, vice president of advocacy for Consumer Reports.”

“The rules are there so consumers can make informed decisions,” Friedman says in the story. “Tesla doesn’t get to pick which ones apply to them.”

Meanwhile, the low-crash-risk talking point remains on Tesla’s Web site.

“Based on the advanced architecture of Model S and Model X, which were previously found by the National Highway Traffic Safety Administration (NHTSA) to have the lowest and second lowest probabilities of injury of all cars ever tested, we engineered Model 3 to be the safest car ever built,” reads a blog titled “Model 3 achieves the lowest probability of injury of any vehicle ever tested by NHTSA.” “Many companies try to build cars that perform well in crash tests, and every car company claims their vehicles are safe. But when a crash happens in real life, these test results show that if you are driving a Tesla, you have the best chance of avoiding serious injury.”

Bottom line: the law and regulation governing cars that truly drive themselves will keep developing for some time. There are all sorts of issues to work out in terms of liability and technology. Can’t wait for the Jetsons. Too young to remember? Google “George Jetson.”
